Testing Client-Server Systems

Introduction

In the modern world, Client-Server architecture has become a standard, and it is applied in the vast majority of the software applications we use every day. Client-Server architecture, also known as the networking model, makes it possible to decompose a complex system into smaller components, each playing its own role. On the server side, such a system may consist of one component (a monolith) or many (microservices) that are responsible for collecting and storing data, maintaining its relations, and providing access to the processed data over the network to multiple clients as well as to other servers. On the client side, one or more components handle the presentation logic and use the services provided by the server side through network requests. The key feature of this architecture is therefore the communication between clients and servers: the messages that are formatted, sent and received, without which the whole system cannot operate. Making sure that all messages are formatted in a way the other side accepts, and verifying that the contract between client and server is maintained, becomes one of the most important areas of software testing.

Using a Client-Server architecture provides many advantages, such as flexibility and the ability to ship each component faster instead of releasing the entire system at once. On the other hand, testing Client-Server systems can be rather difficult because of their complex nature, in which each component depends on and interacts with other components. Quite often the effort required to test such components exceeds the development effort.

General approach

In a standard Software Development Life Cycle, testing starts in the planning phase, when the requirements are defined and the contract for the schemas of the messages exchanged between client and server is determined. It is very important to align the testing process as closely as possible with the development processes on both the client and the server side, so that misinterpretations or gaps in the requirements are detected early and potential integration conflicts are prevented. Identifying even a small problem at an early stage increases the chances that it will be properly fixed instead of becoming technical debt to be resolved in future iterations.
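To make the idea of an agreed message contract concrete, here is a minimal Python sketch, assuming the client and server teams have agreed on a flat JSON "create user" request. The contract `CREATE_USER_REQUEST` and the helper `contract_violations` are hypothetical; real projects usually express such contracts in a schema language such as JSON Schema or OpenAPI and validate them with an existing library.

```python
from typing import Any

# Hypothetical contract for a "create user" request, agreed on by the client
# and server teams during planning. Field names and types are illustrative.
CREATE_USER_REQUEST: dict[str, type] = {
    "username": str,
    "email": str,
    "age": int,
}


def contract_violations(message: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return human-readable contract violations; an empty list means the message conforms."""
    problems = []
    for field, expected in contract.items():
        if field not in message:
            problems.append(f"missing field: {field}")
        # Exact type check, so that e.g. True is not accepted where an int is expected.
        elif type(message[field]) is not expected:
            actual = type(message[field]).__name__
            problems.append(f"wrong type for {field}: expected {expected.__name__}, got {actual}")
    # Fields the contract does not mention are reported too (sorted for stable output).
    for field in sorted(message.keys() - contract.keys()):
        problems.append(f"unexpected field: {field}")
    return problems


if __name__ == "__main__":
    valid = {"username": "alice", "email": "alice@example.com", "age": 30}
    invalid = {"username": "bob", "age": "thirty", "nickname": "b"}
    print(contract_violations(valid, CREATE_USER_REQUEST))  # []
    print(contract_violations(invalid, CREATE_USER_REQUEST))
    # ['missing field: email', 'wrong type for age: expected int, got str', 'unexpected field: nickname']
```

Because both sides can run the same check, a disagreement about a field name or type surfaces as a failing test during development rather than as an integration conflict later.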

A common mistake made by many companies is to rely entirely on Quality Assurance (QA) engineers when it comes to testing. By the time the software is delivered to QA, it may already have significant functional problems that are hard to fix at that point but could easily have been prevented if QA had been involved from the beginning of the process and developers had considered more test scenarios at the design stage. Testing must therefore be a shared effort rather than the responsibility of a particular engineering department. A well-known way to address this problem is to follow a Test Driven Development (TDD) or Behavior Driven Development (BDD) process, in which requirements are turned into automated test cases before any development starts. This helps to identify possible use cases and improves the design of the application by splitting it into smaller, more testable units.
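The TDD cycle can be sketched in Python under a hypothetical requirement ("a login request carries a trimmed username and a non-empty password"): the tests are written first and fail until `build_login_request`, also hypothetical, is implemented. The test functions use the plain-`assert` style that pytest discovers automatically, but they are called directly here so the example runs on its own.

```python
# Step 1 ("red"): the requirement is captured as tests before any code exists.
# Hypothetical requirement: "A login request carries a trimmed username and a
# non-empty password."
def test_username_is_trimmed():
    assert build_login_request("  alice ", "s3cret")["username"] == "alice"


def test_empty_password_is_rejected():
    try:
        build_login_request("alice", "")
    except ValueError:
        return  # expected
    raise AssertionError("an empty password should be rejected")


# Step 2 ("green"): the simplest implementation that makes both tests pass.
def build_login_request(username: str, password: str) -> dict:
    if not password:
        raise ValueError("password must not be empty")
    return {"username": username.strip(), "password": password}


if __name__ == "__main__":
    # pytest would discover the test_* functions automatically; here they are called directly.
    test_username_is_trimmed()
    test_empty_password_is_rejected()
    print("all tests passed")
```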

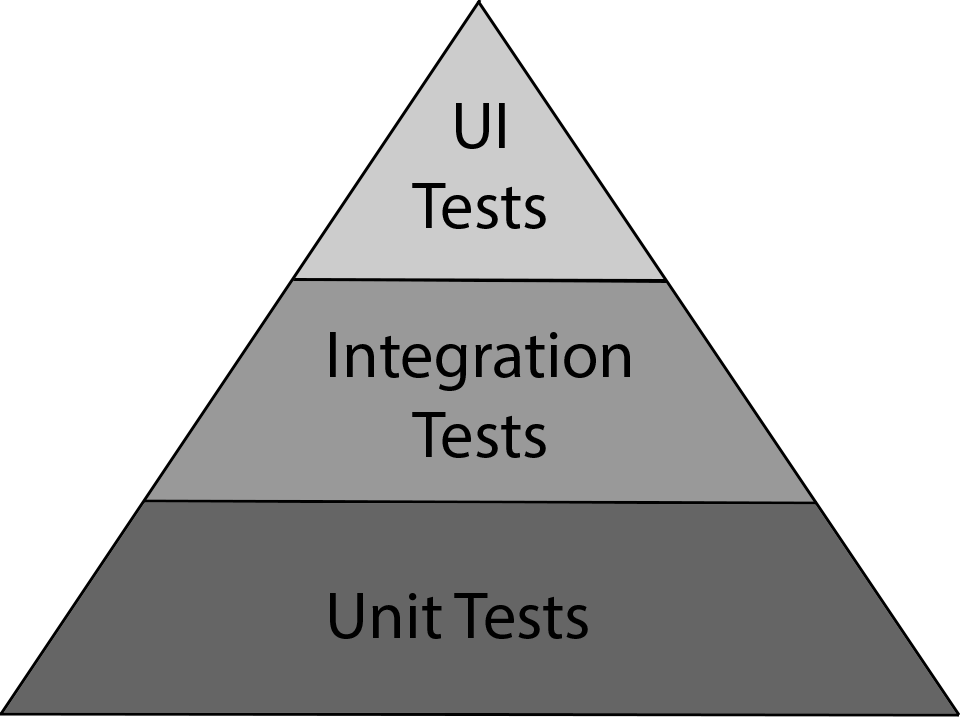

A classic way to describe the ideal testing process, in terms of the time and effort spent at each stage, is the Testing Pyramid.

Unit tests are very fast and provide low-level feedback on the current state of the smallest units of the application. Solid unit test coverage prevents many defects at an early stage and makes it easy to introduce new changes without breaking the existing functionality of a component. However, unit tests cannot verify the communication between multiple components.
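A minimal sketch of such a unit test, assuming a hypothetical client component `WeatherClient` that receives its network transport as a constructor argument. The transport is replaced with `unittest.mock.Mock`, so the test is fast and deterministic, but it says nothing about whether the real server actually returns a `temp_c` field, which is exactly the communication gap unit tests leave open.

```python
from unittest.mock import Mock


class WeatherClient:
    """Hypothetical client-side component: formats data fetched from a server."""

    def __init__(self, transport):
        # `transport` is assumed to expose get(path) -> dict; in production it
        # would wrap real network calls, in a unit test it is replaced by a mock.
        self.transport = transport

    def temperature_label(self, city: str) -> str:
        data = self.transport.get(f"/weather/{city}")
        return f"{city}: {data['temp_c']:.1f} C"


def test_temperature_label_formats_server_data():
    transport = Mock()
    transport.get.return_value = {"temp_c": 21.456}  # fake server response
    client = WeatherClient(transport)

    assert client.temperature_label("Oslo") == "Oslo: 21.5 C"
    # The mock also records how the component called its dependency.
    transport.get.assert_called_once_with("/weather/Oslo")


if __name__ == "__main__":
    test_temperature_label_formats_server_data()
    print("unit test passed")
```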

User Interface (UI) tests are the slowest and the most expensive to execute and maintain, and when automated they tend to be less reliable. This is because they are mainly executed against the entire system and interact with many components at the same time. Quite often they only reveal that there is a problem in the system without indicating which component caused the failure. UI tests are good for validating the integrity of the system as a whole during the final stage of testing, as well as for identifying cosmetic defects and improving the user experience.

Integration tests are performed to verify the interactions between different components or units and to detect interface mismatches and inconsistencies. Integration testing can address a software system's functionality as well as its performance and reliability.

Integration testing

Integration plays a key role in the development of a Client-Server system, because such systems are usually assembled from many different components: some of them depend on other components, and some may never have been used in combination before. Unfortunately, when a company's priorities are driven by strict deadlines, there is often not enough time for full unit test coverage, so most of the functionality ends up being tested only at the integration stage.

Testing the integration of the entire system at once is considered high-risk: when too many components are integrated together simultaneously, the outcome is rather unpredictable, and problems are hard to isolate and trace to a root cause.

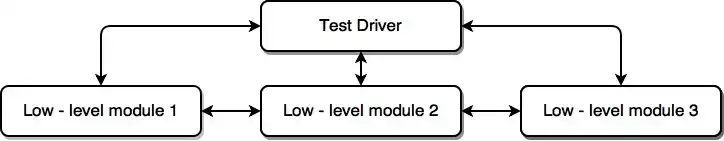

One of the most efficient integration testing approaches is the Bottom-Up strategy, in which low-level modules and components are combined into small groups and tested incrementally, adding more and more pieces of the software in hierarchical order until the entire system is assembled. This makes it possible to isolate unexpected behavior and functional defects to particular modules.

Bottom-Up integration
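The Bottom-Up strategy can be sketched in Python as follows, assuming three hypothetical layers: an in-memory `UserStore` standing in for a database, a `UserService` with business rules, and a JSON API handler `handle_get_user`. Each level is tested only after the level below it has passed, so a new failure points at the most recently added module. The test code itself plays the part of the not-yet-integrated higher levels.

```python
import json
from typing import Optional


class UserStore:
    """Lowest-level module: keeps users in memory (a stand-in for a database)."""

    def __init__(self):
        self._users: dict = {}

    def save(self, user_id: int, name: str) -> None:
        self._users[user_id] = name

    def load(self, user_id: int) -> Optional[str]:
        return self._users.get(user_id)


class UserService:
    """Middle layer: business rules built on top of the store."""

    def __init__(self, store: UserStore):
        self.store = store

    def register(self, user_id: int, name: str) -> None:
        if not name.strip():
            raise ValueError("name must not be blank")
        self.store.save(user_id, name.strip())

    def display_name(self, user_id: int) -> str:
        name = self.store.load(user_id)
        if name is None:
            raise KeyError(user_id)
        return name.title()


def handle_get_user(service: UserService, raw_request: str) -> str:
    """Top-level API handler: parses a JSON request, returns a JSON response."""
    user_id = json.loads(raw_request)["id"]
    try:
        return json.dumps({"status": 200, "name": service.display_name(user_id)})
    except KeyError:
        return json.dumps({"status": 404})


# Level 1: the lowest module on its own.
store = UserStore()
store.save(1, "ada lovelace")
assert store.load(1) == "ada lovelace"

# Level 2: store + service. A failure here points at their interaction,
# because the store alone has already been verified.
service = UserService(store)
service.register(2, "  alan turing ")
assert service.display_name(2) == "Alan Turing"

# Level 3: add the API handler on top of the verified pair.
assert json.loads(handle_get_user(service, '{"id": 2}')) == {"status": 200, "name": "Alan Turing"}
assert json.loads(handle_get_user(service, '{"id": 99}')) == {"status": 404}
print("all integration levels passed")
```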

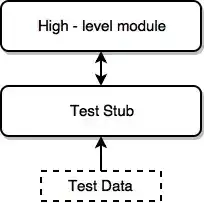

Another approach is the Top-Down strategy, which starts by testing higher-level groups of components. In this case, low-level modules are replaced with stubs that act like the real low-level modules but feed the component under test with fake data. The stubs are then incrementally replaced with the real low-level components until the whole system is put together.

Top-Down integration
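The Top-Down strategy can be sketched in Python as follows, with hypothetical names throughout: the service and API handler are exercised first against `StubUserStore`, which returns canned data, and the stub is later swapped for a "real" store (here `DictUserStore`, itself a simplified stand-in for a database-backed module) while the same assertions are reused.

```python
import json
from typing import Optional


class StubUserStore:
    """Stub for the low-level storage module, which may not be ready yet.
    Instead of querying a database it returns canned, fake data."""

    def load(self, user_id: int) -> Optional[str]:
        return {1: "grace hopper"}.get(user_id)


class DictUserStore:
    """Simplified 'real' store used once the low-level module is available."""

    def __init__(self, users: dict):
        self._users = dict(users)

    def load(self, user_id: int) -> Optional[str]:
        return self._users.get(user_id)


class UserService:
    """Higher-level component under test; it only depends on a load() method."""

    def __init__(self, store):
        self.store = store

    def display_name(self, user_id: int) -> Optional[str]:
        name = self.store.load(user_id)
        return None if name is None else name.title()


def handle_get_user(service: UserService, raw_request: str) -> str:
    """Top-level API handler: JSON request in, JSON response out."""
    name = service.display_name(json.loads(raw_request)["id"])
    if name is None:
        return json.dumps({"status": 404})
    return json.dumps({"status": 200, "name": name})


def check_top_levels(store) -> None:
    """The same assertions are reused with the stub and with the real store."""
    service = UserService(store)
    assert json.loads(handle_get_user(service, '{"id": 1}')) == {"status": 200, "name": "Grace Hopper"}
    assert json.loads(handle_get_user(service, '{"id": 2}')) == {"status": 404}


# Step 1: top-level components tested against the stub.
check_top_levels(StubUserStore())
# Step 2: the stub is replaced with the real low-level module.
check_top_levels(DictUserStore({1: "grace hopper"}))
print("top-down checks passed with stub and real store")
```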

Regardless of which strategy you follow, you end up verifying the messages that one module sends and another module parses through its Application Programming Interface (API). The API is responsible for handling incoming messages (requests), and it must be accessible to other modules so that they can use it. It therefore becomes critical to keep APIs well documented, especially when a component is integrated into a large system and used by many other components.

When testing the API of a server module in integration with a client module, it is important to verify not only that the server responds properly to requests, but also that the requests sent by the client are formatted in a way the server accepts and are consistent with those of the server's other clients. Identifying inconsistencies in requests can help prevent regressions when a code change is made in the server module.
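One way to check request formatting from the client side is sketched below, assuming a hypothetical `search` endpoint and two hypothetical clients (web and mobile). Every client's request is run through the server's own parser, so a client that drifts from the contract, or a server change that would reject an existing client, fails a test instead of failing in production. Consumer-driven contract testing tools automate the same idea across repositories; this is only an in-process illustration.

```python
import json


def parse_search_request(raw: str) -> dict:
    """Hypothetical server-side parser: the single source of truth for what
    the search endpoint accepts."""
    body = json.loads(raw)  # invalid JSON raises json.JSONDecodeError, a ValueError
    if not isinstance(body.get("query"), str) or not body["query"]:
        raise ValueError("'query' must be a non-empty string")
    limit = body.get("limit", 20)  # default used when the client omits it
    if type(limit) is not int or not 1 <= limit <= 100:
        raise ValueError("'limit' must be an integer between 1 and 100")
    return {"query": body["query"], "limit": limit}


# Two hypothetical clients of the same server, built by different teams.
def web_client_request(text: str) -> str:
    return json.dumps({"query": text, "limit": 50})


def mobile_client_request(text: str) -> str:
    return json.dumps({"query": text, "limit": "50"})  # bug: limit sent as a string


def check_clients_against_server(clients: dict) -> dict:
    """Run every client's request through the server parser; collect failures."""
    failures = {}
    for name, build in clients.items():
        try:
            parse_search_request(build("shoes"))
        except ValueError as exc:
            failures[name] = str(exc)
    return failures


if __name__ == "__main__":
    print(check_clients_against_server({"web": web_client_request, "mobile": mobile_client_request}))
    # {'mobile': "'limit' must be an integer between 1 and 100"}
```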

Because a Client-Server system may consist of a large number of components, interface problems and conflicts between components are more likely to occur. In addition, every component's interface needs to be tested for non-functional qualities such as load and stress handling, security and performance.
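For the load aspect, a very rough in-process sketch is shown below, assuming a hypothetical `handle_request` function that simulates server work with a short sleep. It fires concurrent requests from a thread pool, checks every response, and reports latency statistics. A real load or stress test would target a deployed server over the network with a dedicated tool and much larger volumes; the sketch only illustrates what is being measured.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(payload: dict) -> dict:
    """Hypothetical server handler; stands in for a real network round trip."""
    time.sleep(0.001)  # simulated processing time
    return {"status": 200, "echo": payload["n"]}


def timed_call(n: int) -> float:
    """Send one request, check the response, and return its latency in seconds."""
    start = time.perf_counter()
    response = handle_request({"n": n})
    elapsed = time.perf_counter() - start
    if response != {"status": 200, "echo": n}:
        raise RuntimeError(f"unexpected response under load: {response}")
    return elapsed


def run_load(total_requests: int = 200, concurrency: int = 20) -> dict:
    """Issue requests from a pool of worker threads and summarize latencies."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        # map() re-raises any worker exception, so a bad response fails the run.
        latencies = sorted(pool.map(timed_call, range(total_requests)))
    return {
        "requests": len(latencies),
        "median_ms": statistics.median(latencies) * 1000,
        "p95_ms": statistics.quantiles(latencies, n=100, method="inclusive")[94] * 1000,
        "max_ms": latencies[-1] * 1000,
    }


if __name__ == "__main__":
    print(run_load())
```

Increasing `total_requests` and `concurrency` step by step and watching how the p95 latency grows, or when responses start failing, is the basic idea behind a stress test.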

Conclusion

As we can see, Client-Server architecture offers great advantages and opportunities, but it also introduces significant challenges and risks that need to be properly addressed. To test such systems successfully, it is essential to have close collaboration between all engineering departments, to align test processes with development from the early stages, and to treat testing as a team effort.